Interpreting the Oracle Listener Log (listener.log)


Listener log overview

In an Oracle database, the listener log file (listener.log) keeps growing unless it is truncated periodically.

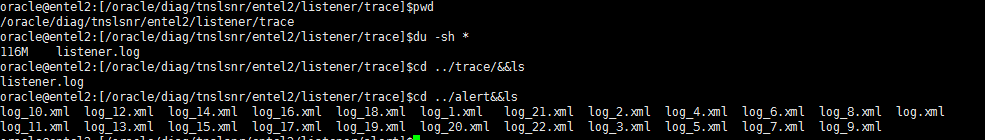

Listener log location

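For Oracle 9i/10g

The log is located at the following path:

$ORACLE_HOME/network/log/listener.log (for the default listener; a named listener logs to <listener_name>.log in the same directory)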

For Oracle 11g/12c

The log is located at the following path:

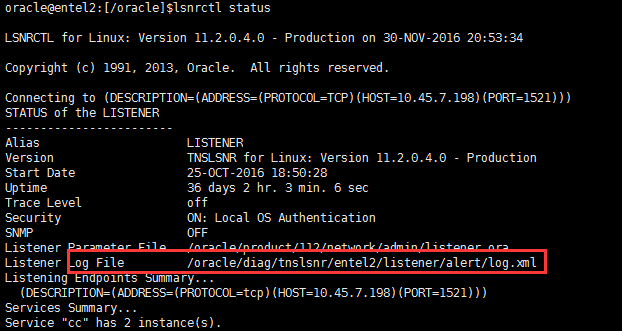

$ORACLE_BASE/diag/tnslsnr/<hostname>/listener/trace/listener.log

The location can also be checked with lsnrctl status.

What is shown here is the XML-format log; its content is the same as the .log file.
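The lsnrctl status lookup can be scripted. The sketch below is a minimal example, assuming the status output contains a line labelled "Listener Log File" followed by the path (the same label the cleanup script later in this article greps for). The function name listener_log_path is made up for illustration.

```shell
# Hypothetical helper: read `lsnrctl status` output on stdin and print the
# path that follows the "Listener Log File" label.
listener_log_path() {
  awk '/Listener Log File/ { print $4; exit }'
}

# Typical use on a host where lsnrctl is on the PATH:
#   lsnrctl status | listener_log_path
```

Reading from stdin keeps the parsing separate from the lsnrctl call, so the helper can be tried against saved status output as well.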

Alternatively, on 11g the log can be viewed with the adrci command:

oracle@entel2:[/oracle]$adrci

ADRCI: Release 11.2.0.4.0 - Production on Wed Nov 30 20:56:28 2016

Copyright (c) 1982, 2011, Oracle and/or its affiliates. All rights reserved.

ADR base = "/oracle"

adrci> help -- help lists the available commands; entering help show alert prints the detailed usage of show alert

HELP [topic]

Available Topics:

CREATE REPORT

ECHO

EXIT

HELP

HOST

IPS

PURGE

RUN

SET BASE

SET BROWSER

SET CONTROL

SET ECHO

SET EDITOR

SET HOMES | HOME | HOMEPATH

SET TERMOUT

SHOW ALERT

SHOW BASE

SHOW CONTROL

SHOW HM_RUN

SHOW HOMES | HOME | HOMEPATH

SHOW INCDIR

SHOW INCIDENT

SHOW PROBLEM

SHOW REPORT

SHOW TRACEFILE

SPOOL

There are other commands intended to be used directly by Oracle, type

"HELP EXTENDED" to see the list

adrci> show alert -- display the alert log

Choose the alert log from the following homes to view:

1: diag/clients/user_oracle/host_880756540_80

2: diag/tnslsnr/procsdb2/listener_cc

3: diag/tnslsnr/entel2/sid_list_listener

4: diag/tnslsnr/entel2/listener_rb

5: diag/tnslsnr/entel2/listener

6: diag/tnslsnr/entel2/listener_cc

7: diag/tnslsnr/procsdb1/listener_rb

8: diag/rdbms/ccdg/ccdg

9: diag/rdbms/rb/rb

10: diag/rdbms/cc/cc

Q: to quit

Please select option: 5 -- enter a number to view the corresponding log

Output the results to file: /tmp/alert_13187_1397_listener_3.ado

2016-06-27 09:15:45.164000 -04:00

Create Relation ADR_CONTROL

Create Relation ADR_INVALIDATION

Create Relation INC_METER_IMPT_DEF

2016-06-27 09:15:46.444000 -04:00

Create Relation INC_METER_PK_IMPTS

System parameter file is /oracle/product/112/network/admin/listener.ora

Log messages written to /oracle/diag/tnslsnr/entel2/listener/alert/log.xml

Trace information written to /oracle/diag/tnslsnr/entel2/listener/trace/ora_16175_140656975550208.trc

Trace level is currently 0

Started with pid=16175

Listening on: (DESCRIPTION=(ADDRESS=(PROTOCOL=tcp)(HOST=10.45.7.198)(PORT=1521)))

Listening on: (DESCRIPTION=(ADDRESS=(PROTOCOL=tcp)(HOST=192.168.123.1)(PORT=1521)))

Listener completed notification to CRS on start

......

......

......
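The interactive session above can also be run without the menu. This is a minimal sketch, assuming the adrci exec="..." command-line form and the -tail option of show alert are available in your release. The wrapper name adrci_tail_alert and the ADRCI override variable are hypothetical; the override exists only so the command can be previewed without calling adrci.

```shell
# Hypothetical wrapper: print the last N alert entries of one ADR home.
# $1 = ADR home as listed by "show alert" (e.g. diag/tnslsnr/entel2/listener)
# $2 = number of entries to show (defaults to 20)
# Set ADRCI=echo to preview the arguments instead of calling adrci.
adrci_tail_alert() {
  adr_home=$1
  entries=${2:-20}
  "${ADRCI:-adrci}" exec="set homepath $adr_home; show alert -tail $entries"
}
```

For example, adrci_tail_alert diag/tnslsnr/entel2/listener 50 would request the last 50 entries of the home chosen as option 5 above.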

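Cleaning up the listener log file

The listener log file (listener.log) needs to be cleaned up regularly, for the following reasons:

1: The listener log file (listener.log) keeps growing and takes up extra storage space.

2: An oversized listener log file causes problems of its own, and finding anything in it becomes tedious.

3: An oversized listener log file slows down both writing to the file and viewing it.

Cleaning up the listener log file (listener.log) periodically is also known as truncating the log file.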

An example of the wrong approach

oracle@entel2:[/oracle]$mv listener.log listener.log.20161201

oracle@entel2:[/oracle]$cp /dev/null listener.log

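oracle@entel2:[/oracle]$more listener.log

As shown above, after the listener log (listener.log) is truncated this way, the listener process (tnslsnr) does not write new entries to listener.log. It still holds the original file open, so it keeps writing to the renamed file listener.log.20161201.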
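The effect can be reproduced without a listener. The sketch below is an illustration only: a shell file descriptor stands in for tnslsnr's open log file, and the function name demo_rename_keeps_fd is hypothetical. It shows that a writer that opened the file before the mv keeps writing to the renamed file.

```shell
# Stand-in for tnslsnr: a writer that opened the log before it was renamed.
demo_rename_keeps_fd() {
  dir=$(mktemp -d) || return 1
  : > "$dir/listener.log"
  {
    # File descriptor 3 stays open on the original file for this whole group.
    mv "$dir/listener.log" "$dir/listener.log.20161201"
    cp /dev/null "$dir/listener.log"
    echo "new connection entry" >&3   # lands in the renamed file
  } 3>>"$dir/listener.log"
  [ -s "$dir/listener.log.20161201" ] && renamed=has-data || renamed=empty
  [ -s "$dir/listener.log" ] && fresh=has-data || fresh=empty
  echo "renamed=$renamed new=$fresh"
  rm -rf "$dir"
}

demo_rename_keeps_fd   # prints: renamed=has-data new=empty
```

The descriptor follows the file itself rather than its name, which is why logging has to be switched off (so the listener releases the file) before the log is moved or emptied.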

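The correct approach

1: First stop the listener process (tnslsnr) from logging.

oracle@entel2:[/oracle]$lsnrctl set log_status off

2: Copy the listener log file (listener.log), naming the copy in the format listener.log.yyyymmdd.

oracle@entel2:[/oracle]$cp listener.log listener.log.20161201

3: Empty the listener log file (listener.log). There are many ways to empty a file:

oracle@entel2:[/oracle]$echo -n "" > listener.log

or

oracle@entel2:[/oracle]$cp /dev/null listener.log

or

oracle@entel2:[/oracle]$cat /dev/null > listener.log

or

oracle@entel2:[/oracle]$>listener.log

4: Turn logging by the listener process (tnslsnr) back on.

oracle@entel2:[/oracle]$lsnrctl set log_status on

Alternatively, the listener log file (listener.log) can simply be moved away while logging is off; the listener then creates a new listener.log automatically.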

oracle@entel2:[/oracle]$ lsnrctl set log_status off

oracle@entel2:[/oracle]$mv listener.log listener.yyyymmdd

oracle@entel2:[/oracle]$lsnrctl set log_status on
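The four steps of the copy-and-truncate procedure can be wrapped in one function. This is a minimal sketch, not a replacement for the full script later in this article: the function name rotate_listener_log and the LSNRCTL override variable are hypothetical, and it assumes the default listener, as the commands above do.

```shell
# Hypothetical helper: copy-and-truncate one listener log.
# $1 = path to listener.log. LSNRCTL may be set to override the lsnrctl
# binary (for example LSNRCTL=true for a dry run on a host without a listener).
rotate_listener_log() {
  log_file=$1
  lsnrctl_cmd=${LSNRCTL:-lsnrctl}
  stamp=$(date +%Y%m%d)

  [ -f "$log_file" ] || { echo "No such log file: $log_file" >&2; return 1; }

  # Step 1: stop logging so the listener releases the file.
  "$lsnrctl_cmd" set log_status off || return 1

  # Steps 2 and 3: keep a dated copy, then empty the live file.
  cp "$log_file" "$log_file.$stamp" && : > "$log_file"
  rc=$?

  # Step 4: turn logging back on even if the copy failed.
  "$lsnrctl_cmd" set log_status on
  return $rc
}
```

Turning logging back on regardless of the copy result is a deliberate choice here: a failed copy should not leave the listener silent.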

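Cleanup shell scripts

Of course, these steps should be handled by a shell script and scheduled as a crontab job, so that the listener log file is cleaned up and truncated regularly.

A simple version (core part only)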

rq=` date +"%d" `

cp $ORACLE_HOME/network/log/listener.log $ORACLE_BACKUP/network/log/listener_$rq.log

su - oracle -c "lsnrctl set log_status off"

cp /dev/null $ORACLE_HOME/network/log/listener.log

su - oracle -c "lsnrctl set log_status on"

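This script still leaves one problem unsolved: how long the truncated listener log files should be kept. For example, I may want to keep them for only one month and have the job maintain that automatically, with no manual work. The script cls_oracle.sh does exactly that, and it also archives and cleans up other log files, such as the alert log (alert_sid.log). It is very powerful.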
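The retention idea on its own can be sketched in a few lines. This is a minimal sketch, assuming the rotated copies carry an eight-digit date suffix (listener.log.yyyymmdd) and that find supports -maxdepth (GNU and BSD find do). The function name purge_rotated_logs is hypothetical.

```shell
# Hypothetical helper: delete rotated copies of one log older than N days.
# $1 = base log path (copies are expected as <path>.yyyymmdd)
# $2 = number of days to keep
purge_rotated_logs() {
  base=$1
  keep_days=$2

  # Refuse empty or non-numeric values so find never sees a bad -mtime.
  case "$keep_days" in
    ''|*[!0-9]*) echo "Invalid number of days: $keep_days" >&2; return 1 ;;
  esac

  # Only dated copies in the log's own directory are candidates;
  # the live log has no date suffix and is never matched.
  find "$(dirname "$base")" -maxdepth 1 -type f \
    -name "$(basename "$base").[0-9][0-9][0-9][0-9][0-9][0-9][0-9][0-9]" \
    -mtime +"$keep_days" -exec rm -f {} \;
}
```

Called as purge_rotated_logs /path/to/listener.log 31 right after a rotation, it keeps roughly one month of dated copies.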

#!/bin/bash

#

# Script used to cleanup any Oracle environment.

#

# Cleans: audit_log_dest

# background_dump_dest

# core_dump_dest

# user_dump_dest

#

# Rotates: Alert Logs

# Listener Logs

#

# Scheduling: 00 00 * * * /home/oracle/_cron/cls_oracle/cls_oracle.sh -d 31 > /home/oracle/_cron/cls_oracle/cls_oracle.log 2>&1

#

# Created By: Tommy Wang 2012-09-10

#

# History:

#

RM="rm -f"

RMDIR="rm -rf"

LS="ls -l"

MV="mv"

TOUCH="touch"

TESTTOUCH="echo touch"

TESTMV="echo mv"

TESTRM=$LS

TESTRMDIR=$LS

SUCCESS=0

FAILURE=1

TEST=0

HOSTNAME=`hostname`

ORAENV="oraenv"

TODAY=`date +%Y%m%d`

ORIGPATH=/usr/local/bin:$PATH

ORIGLD=$LD_LIBRARY_PATH

export PATH=$ORIGPATH

# Usage function.

f_usage(){

echo "Usage: `basename $0` -d DAYS [-a DAYS] [-b DAYS] [-c DAYS] [-n DAYS] [-r DAYS] [-u DAYS] [-t] [-h]"

echo " -d = Mandatory default number of days to keep log files that are not explicitly passed as parameters."

echo " -a = Optional number of days to keep audit logs."

echo " -b = Optional number of days to keep background dumps."

echo " -c = Optional number of days to keep core dumps."

echo " -n = Optional number of days to keep network log files."

echo " -r = Optional number of days to keep clusterware log files."

echo " -u = Optional number of days to keep user dumps."

echo " -h = Optional help mode."

echo " -t = Optional test mode. Does not delete any files."

}

if [ $# -lt 1 ]; then

f_usage

exit $FAILURE

fi

# Function used to check the validity of days.

f_checkdays(){

# Reject empty or non-numeric values before the numeric comparison.

case "$1" in

''|*[!0-9]*)

echo "ERROR: Number of days is invalid."

exit $FAILURE

;;

esac

if [ "$1" -lt 1 ]; then

echo "ERROR: Number of days is invalid."

exit $FAILURE

fi

}

# Function used to cut log files.

f_cutlog(){

# Set name of log file.

LOG_FILE=$1

CUT_FILE=${LOG_FILE}.${TODAY}

FILESIZE=`ls -l $LOG_FILE | awk '{print $5}'`

# Cut the log file if it has not been cut today.

if [ -f $CUT_FILE ]; then

echo "Log Already Cut Today: $CUT_FILE"

elif [ ! -f $LOG_FILE ]; then

echo "Log File Does Not Exist: $LOG_FILE"

elif [ $FILESIZE -eq 0 ]; then

echo "Log File Has Zero Size: $LOG_FILE"

else

# Cut file.

echo "Cutting Log File: $LOG_FILE"

$MV $LOG_FILE $CUT_FILE

$TOUCH $LOG_FILE

fi

}

# Function used to delete log files.

f_deletelog(){

# Set name of log file.

CLEAN_LOG=$1

# Set time limit and confirm it is valid.

CLEAN_DAYS=$2

f_checkdays $CLEAN_DAYS

# Delete old log files if they exist.

find $CLEAN_LOG.[0-9][0-9][0-9][0-9][0-9][0-9][0-9][0-9] -type f -mtime +$CLEAN_DAYS -exec $RM {} \; 2>/dev/null

}

# Function used to get database parameter values.

f_getparameter(){

if [ -z "$1" ]; then

return

fi

PARAMETER=$1

sqlplus -s /nolog <<EOF | awk -F= "/^a=/ {print \$2}"

set head off pagesize 0 feedback off linesize 200

whenever sqlerror exit 1

conn / as sysdba

select 'a='||value from v\$parameter where name = '$PARAMETER';

EOF

}

# Function to get unique list of directories.

f_getuniq(){

if [ -z "$1" ]; then

return

fi

unset ARRAY

ARRCNT=0

for e in `echo $1`; do

# Start every element with a fresh scan of the array.

MATCH=N

x=0

# See if the array element is a duplicate.

while [ $x -lt ${#ARRAY[*]} ]; do

if [ "$e" = "${ARRAY[$x]}" ]; then

MATCH=Y

fi

x=`expr $x + 1`

done

if [ "$MATCH" = "N" ]; then

ARRAY[$ARRCNT]=$e

ARRCNT=`expr $ARRCNT + 1`

fi

done

echo ${ARRAY[*]}

}

# Parse the command line options.

while getopts a:b:c:d:n:r:u:th OPT; do

case $OPT in

a) ADAYS=$OPTARG

;;

b) BDAYS=$OPTARG

;;

c) CDAYS=$OPTARG

;;

d) DDAYS=$OPTARG

;;

n) NDAYS=$OPTARG

;;

r) RDAYS=$OPTARG

;;

u) UDAYS=$OPTARG

;;

t) TEST=1

;;

h) f_usage

exit 0

;;

*) f_usage

exit 2

;;

esac

done

shift $(($OPTIND - 1))

# Ensure the default number of days is passed.

if [ -z "$DDAYS" ]; then

echo "ERROR: The default days parameter is mandatory."

f_usage

exit $FAILURE

fi

f_checkdays $DDAYS

echo "`basename $0` Started `date`."

# Use test mode if specified.

if [ $TEST -eq 1 ]

then

RM=$TESTRM

RMDIR=$TESTRMDIR

MV=$TESTMV

TOUCH=$TESTTOUCH

echo "Running in TEST mode."

fi

# Set the number of days to the default if not explicitly set.

ADAYS=${ADAYS:-$DDAYS}; echo "Keeping audit logs for $ADAYS days."; f_checkdays $ADAYS

BDAYS=${BDAYS:-$DDAYS}; echo "Keeping background logs for $BDAYS days."; f_checkdays $BDAYS

CDAYS=${CDAYS:-$DDAYS}; echo "Keeping core dumps for $CDAYS days."; f_checkdays $CDAYS

NDAYS=${NDAYS:-$DDAYS}; echo "Keeping network logs for $NDAYS days."; f_checkdays $NDAYS

RDAYS=${RDAYS:-$DDAYS}; echo "Keeping clusterware logs for $RDAYS days."; f_checkdays $RDAYS

UDAYS=${UDAYS:-$DDAYS}; echo "Keeping user logs for $UDAYS days."; f_checkdays $UDAYS

# Check for the oratab file.

if [ -f /var/opt/oracle/oratab ]; then

ORATAB=/var/opt/oracle/oratab

elif [ -f /etc/oratab ]; then

ORATAB=/etc/oratab

else

echo "ERROR: Could not find oratab file."

exit $FAILURE

fi

# Build list of distinct Oracle Home directories.

OH=`egrep -i ":Y|:N" $ORATAB | grep -v "^#" | grep -v "\*" | cut -d":" -f2 | sort | uniq`

# Exit if there are not Oracle Home directories.

if [ -z "$OH" ]; then

echo "No Oracle Home directories to clean."

exit $SUCCESS

fi

# Get the list of running databases.

SIDS=`ps -e -o args | grep pmon | grep -v grep | awk -F_ '{print $3}' | sort`

# Gather information for each running database.

for ORACLE_SID in `echo $SIDS`

do

# Set the Oracle environment.

ORAENV_ASK=NO

export ORACLE_SID

. $ORAENV

if [ $? -ne 0 ]; then

echo "Could not set Oracle environment for $ORACLE_SID."

else

export LD_LIBRARY_PATH=$ORACLE_HOME/lib:$ORIGLD

ORAENV_ASK=YES

echo "ORACLE_SID: $ORACLE_SID"

# Get the audit_dump_dest.

ADUMPDEST=`f_getparameter audit_dump_dest`

if [ ! -z "$ADUMPDEST" ] && [ -d "$ADUMPDEST" 2>/dev/null ]; then

echo " Audit Dump Dest: $ADUMPDEST"

ADUMPDIRS="$ADUMPDIRS $ADUMPDEST"

fi

# Get the background_dump_dest.

BDUMPDEST=`f_getparameter background_dump_dest`

echo " Background Dump Dest: $BDUMPDEST"

if [ ! -z "$BDUMPDEST" ] && [ -d "$BDUMPDEST" ]; then

BDUMPDIRS="$BDUMPDIRS $BDUMPDEST"

fi

# Get the core_dump_dest.

CDUMPDEST=`f_getparameter core_dump_dest`

echo " Core Dump Dest: $CDUMPDEST"

if [ ! -z "$CDUMPDEST" ] && [ -d "$CDUMPDEST" ]; then

CDUMPDIRS="$CDUMPDIRS $CDUMPDEST"

fi

# Get the user_dump_dest.

UDUMPDEST=`f_getparameter user_dump_dest`

echo " User Dump Dest: $UDUMPDEST"

if [ ! -z "$UDUMPDEST" ] && [ -d "$UDUMPDEST" ]; then

UDUMPDIRS="$UDUMPDIRS $UDUMPDEST"

fi

fi

done

# Do cleanup for each Oracle Home.

for ORAHOME in `f_getuniq "$OH"`

do

# Get the standard audit directory if present.

if [ -d $ORAHOME/rdbms/audit ]; then

ADUMPDIRS="$ADUMPDIRS $ORAHOME/rdbms/audit"

fi

# Get the Cluster Ready Services Daemon (crsd) log directory if present.

if [ -d $ORAHOME/log/$HOSTNAME/crsd ]; then

CRSLOGDIRS="$CRSLOGDIRS $ORAHOME/log/$HOSTNAME/crsd"

fi

# Get the Oracle Cluster Registry (OCR) log directory if present.

if [ -d $ORAHOME/log/$HOSTNAME/client ]; then

OCRLOGDIRS="$OCRLOGDIRS $ORAHOME/log/$HOSTNAME/client"

fi

# Get the Cluster Synchronization Services (CSS) log directory if present.

if [ -d $ORAHOME/log/$HOSTNAME/cssd ]; then

CSSLOGDIRS="$CSSLOGDIRS $ORAHOME/log/$HOSTNAME/cssd"

fi

# Get the Event Manager (EVM) log directory if present.

if [ -d $ORAHOME/log/$HOSTNAME/evmd ]; then

EVMLOGDIRS="$EVMLOGDIRS $ORAHOME/log/$HOSTNAME/evmd"

fi

# Get the RACG log directory if present.

if [ -d $ORAHOME/log/$HOSTNAME/racg ]; then

RACGLOGDIRS="$RACGLOGDIRS $ORAHOME/log/$HOSTNAME/racg"

fi

done

# Clean the audit_dump_dest directories.

if [ ! -z "$ADUMPDIRS" ]; then

for DIR in `f_getuniq "$ADUMPDIRS"`; do

if [ -d $DIR ]; then

echo "Cleaning Audit Dump Directory: $DIR"

find $DIR -type f -name "*.aud" -mtime +$ADAYS -exec $RM {} \; 2>/dev/null

fi

done

fi

# Clean the background_dump_dest directories.

if [ ! -z "$BDUMPDIRS" ]; then

for DIR in `f_getuniq "$BDUMPDIRS"`; do

if [ -d $DIR ]; then

echo "Cleaning Background Dump Destination Directory: $DIR"

# Clean up old trace files.

find $DIR -type f -name "*.tr[cm]" -mtime +$BDAYS -exec $RM {} \; 2>/dev/null

find $DIR -type d -name "cdmp*" -mtime +$BDAYS -exec $RMDIR {} \; 2>/dev/null

fi

if [ -d $DIR ]; then

# Cut the alert log and clean old ones.

for f in `find $DIR -type f -name "alert\_*.log" ! -name "alert_[0-9A-Z]*.[0-9]*.log" 2>/dev/null`; do

echo "Alert Log: $f"

f_cutlog $f

f_deletelog $f $BDAYS

done

fi

done

fi

# Clean the core_dump_dest directories.

if [ ! -z "$CDUMPDIRS" ]; then

for DIR in `f_getuniq "$CDUMPDIRS"`; do

if [ -d $DIR ]; then

echo "Cleaning Core Dump Destination: $DIR"

find $DIR -type d -name "core*" -mtime +$CDAYS -exec $RMDIR {} \; 2>/dev/null

fi

done

fi

# Clean the user_dump_dest directories.

if [ ! -z "$UDUMPDIRS" ]; then

for DIR in `f_getuniq "$UDUMPDIRS"`; do

if [ -d $DIR ]; then

echo "Cleaning User Dump Destination: $DIR"

find $DIR -type f -name "*.trc" -mtime +$UDAYS -exec $RM {} \; 2>/dev/null

fi

done

fi

# Cluster Ready Services Daemon (crsd) Log Files

for DIR in `f_getuniq "$CRSLOGDIRS $OCRLOGDIRS $CSSLOGDIRS $EVMLOGDIRS $RACGLOGDIRS"`; do

if [ -d $DIR ]; then

echo "Cleaning Clusterware Directory: $DIR"

find $DIR -type f -name "*.log" -mtime +$RDAYS -exec $RM {} \; 2>/dev/null

fi

done

# Clean Listener Log Files.

# Get the list of running listeners. It is assumed that if the listener is not running, the log file does not need to be cut.

ps -e -o args | grep tnslsnr | grep -v grep | while read LSNR; do

# Derive the lsnrctl path from the tnslsnr process path.

TNSLSNR=`echo $LSNR | awk '{print $1}'`

ORACLE_PATH=`dirname $TNSLSNR`

ORACLE_HOME=`dirname $ORACLE_PATH`

PATH=$ORACLE_PATH:$ORIGPATH

LD_LIBRARY_PATH=$ORACLE_HOME/lib:$ORIGLD

LSNRCTL=$ORACLE_PATH/lsnrctl

echo "Listener Control Command: $LSNRCTL"

# Derive the listener name from the running process.

LSNRNAME=`echo $LSNR | awk '{print $2}' | tr "[:upper:]" "[:lower:]"`

echo "Listener Name: $LSNRNAME"

# Get the listener version.

LSNRVER=`$LSNRCTL version | grep "LSNRCTL" | grep "Version" | awk '{print $5}' | awk -F. '{print $1}'`

echo "Listener Version: $LSNRVER"

# Get the TNS_ADMIN variable.

echo "Initial TNS_ADMIN: $TNS_ADMIN"

unset TNS_ADMIN

TNS_ADMIN=`$LSNRCTL status $LSNRNAME | grep "Listener Parameter File" | awk '{print $4}'`

if [ ! -z $TNS_ADMIN ]; then

export TNS_ADMIN=`dirname $TNS_ADMIN`

else

export TNS_ADMIN=$ORACLE_HOME/network/admin

fi

echo "Network Admin Directory: $TNS_ADMIN"

# If the listener is 11g, get the diagnostic dest, etc...

if [ $LSNRVER -ge 11 ]; then

# Get the listener log file directory.

LSNRDIAG=`$LSNRCTL<<EOF | grep log_directory | awk '{print $6}'

set current_listener $LSNRNAME

show log_directory

EOF`

echo "Listener Diagnostic Directory: $LSNRDIAG"

# Get the listener log file name.

LSNRLOG=`$LSNRCTL<<EOF | grep trc_directory | awk '{print $6"/"$1".log"}'

set current_listener $LSNRNAME

show trc_directory

EOF`

echo "Listener Log File: $LSNRLOG"

# If 10g or lower, do not use diagnostic dest.

else

# Get the listener log file location.

LSNRLOG=`$LSNRCTL status $LSNRNAME | grep "Listener Log File" | awk '{print $4}'`

fi

# See if the listener is logging.

if [ -z "$LSNRLOG" ]; then

echo "Listener Logging is OFF. Not rotating the listener log."

# See if the listener log exists.

elif [ ! -r "$LSNRLOG" ]; then

echo "Listener Log Does Not Exist: $LSNRLOG"

# See if the listener log has been cut today.

elif [ -f $LSNRLOG.$TODAY ]; then

echo "Listener Log Already Cut Today: $LSNRLOG.$TODAY"

# Cut the listener log if the previous two conditions were not met.

else

# Remove old 11g+ listener log XML files.

if [ ! -z "$LSNRDIAG" ] && [ -d "$LSNRDIAG" ]; then

echo "Cleaning Listener Diagnostic Dest: $LSNRDIAG"

find $LSNRDIAG -type f -name "log\_[0-9]*.xml" -mtime +$NDAYS -exec $RM {} \; 2>/dev/null

fi

# Disable logging.

$LSNRCTL <<EOF

set current_listener $LSNRNAME

set log_status off

EOF

# Cut the listener log file.

f_cutlog $LSNRLOG

# Enable logging.

$LSNRCTL <<EOF

set current_listener $LSNRNAME

set log_status on

EOF

# Delete old listener logs.

f_deletelog $LSNRLOG $NDAYS

fi

done

echo "`basename $0` Finished `date`."

exit

Set up a crontab job that runs this script every night at midnight and keeps log files for 31 days.

00 00 * * * /home/oracle/_cron/cls_oracle/cls_oracle.sh -d 31 > /home/oracle/_cron/cls_oracle/cls_oracle.sh.log 2>&1
